This post covers the VCAP-DCA objective of understanding the various RAID levels that you will come across when working with vSphere storage. There are already some great resources on RAID available on the Internet, so the aim here is to go over some of the more common RAID configurations and look at their benefits and weaknesses. The RAID levels most commonly used with vSphere are RAID 0, 1, 5, 6 and 1+0.

RAID 0

RAID 0 is striping only, without redundancy.

The data is striped over all disks in the RAID group. A RAID 0 configuration requires a minimum of two disks, and has the following characteristics:

- Very good performance.
- All disk capacity can be used for storage.

However:

- There is no redundancy.

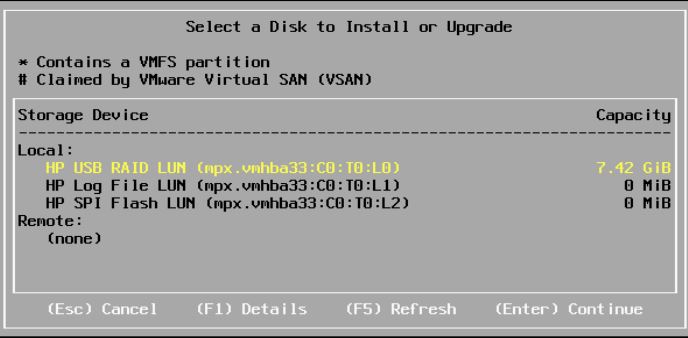

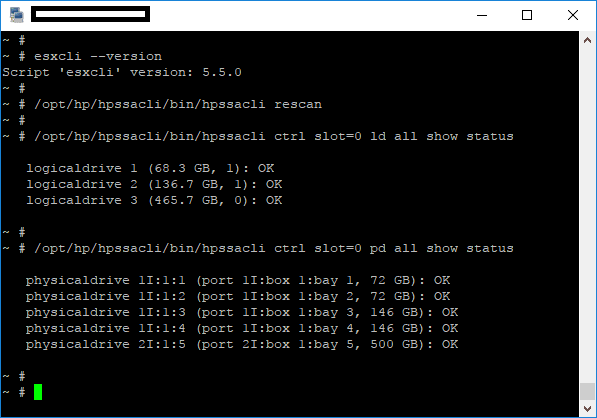


A single drive failure will destroy all data on the RAID volume.

RAID 1

RAID 1 is mirroring data across disks. For example, if you have two disks, the same data is on both disks. A RAID 1 set requires a minimum of two disks.

- If a disk fails, there is a copy of the data on the other disk ready to take over.

- Minimal write performance degradation.

But:

- You lose half of your total capacity. If you have two 1 TB disks, you will only have approximately 1 TB of usable capacity.

RAID 5

RAID 5 is striping with parity.

Data is striped across all disks in the RAID set along with the parity bits. RAID 5 requires a minimum of three disks.

- Can afford to lose one disk in the set without loss of data.
- Excellent read performance.

However:

- Write performance is lower than that of RAID 1 due to the need to calculate the parity.
- When a disk fails, performance will be degraded.

RAID 6

RAID 6 is striping with double parity. Like RAID 5, data is striped across all disks in the RAID set, along with double parity. This means the minimum number of disks required is four.

- Can cope with the loss of two disks in the RAID set without data loss.
- Excellent read performance.

But:

- More disk space is used up due to the additional parity, resulting in less usable capacity.
- Write performance is not as good as either RAID 1 or RAID 5 due to the requirement of calculating the double parity.
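To make the parity idea concrete, here is a minimal sketch of single parity as used conceptually by RAID 5, assuming the parity block is the byte-wise XOR of the data blocks in a stripe. The block contents and the helper name `xor_blocks` are made up for illustration, and real controllers also rotate parity across disks, which is not shown. The second parity used by RAID 6 is calculated differently and is not covered here.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, one byte position at a time."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# One stripe on a hypothetical three-disk RAID 5 set:
# two data blocks plus one parity block.
data_a = b"VMDK"
data_b = b"DATA"
parity = xor_blocks([data_a, data_b])

# Simulate losing the disk that held data_b and rebuild it
# from the surviving data block and the parity block.
rebuilt_b = xor_blocks([data_a, parity])
print(rebuilt_b == data_b)
```

Every write has to update the parity block as well as the data block, which is where the write overhead described above comes from.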

RAID 5 and 6 are good, cost-effective options providing both performance and redundancy. They are best for applications that are heavily read-oriented due to the impact the parity calculations have on write performance.

RAID 1+0

Otherwise known as RAID 10, this combines mirroring with striping. Disks in a RAID 10 group are mirrored, then striped across more disks. This means the total number of disks must be an even number, with the minimum number of disks in a RAID 10 set being four.

- Excellent read and write performance.
- Can cope with multiple drive failures, so long as both disks in the same mirrored pair do not fail.

However:

- Only 50% of the total capacity of the disks is available due to the mirroring.

RAID 10 is the best option for mission-critical applications due to the performance and redundancy it offers.

Calculating Disk Performance Requirements

Typically RAID 5/6 is used for operating system data and application data, with RAID 10 being used for data that has a high volume of changes, such as transaction logs.
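Capacity is one side of that choice. The usable-capacity rules described for each level above can be put into numbers with a small sketch. It assumes identical disks and treats RAID 1 as a single mirrored set; the function name `usable_capacity_tb` is hypothetical, and real arrays lose a little more to formatting and metadata.

```python
# Minimum disk counts per RAID level, as given in the text above.
MIN_DISKS = {"0": 2, "1": 2, "5": 3, "6": 4, "10": 4}


def usable_capacity_tb(level, disks, disk_tb):
    """Approximate usable capacity in TB for a set of identical disks."""
    if level not in MIN_DISKS:
        raise ValueError(f"unsupported RAID level: {level}")
    if disks < MIN_DISKS[level]:
        raise ValueError(f"RAID {level} needs at least {MIN_DISKS[level]} disks")
    if level == "10" and disks % 2:
        raise ValueError("RAID 10 needs an even number of disks")

    if level == "0":
        return disks * disk_tb        # striping only, nothing reserved
    if level == "1":
        return disk_tb                # every disk holds the same data
    if level == "5":
        return (disks - 1) * disk_tb  # one disk's worth of parity
    if level == "6":
        return (disks - 2) * disk_tb  # two disks' worth of parity
    return disks // 2 * disk_tb       # RAID 10: half lost to mirroring


for level, disks in (("0", 2), ("1", 2), ("5", 4), ("6", 4), ("10", 4)):
    print(f"RAID {level} with {disks} x 1 TB: {usable_capacity_tb(level, disks, 1.0)} TB usable")
```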

I tend to see most datastores on RAID 5 volumes, with some datastores on RAID 10 to satisfy the performance requirements of applications such as SQL and Oracle. Whichever RAID level you decide to use for virtual machine workloads, it's important to ensure there are enough spindles in the RAID set to satisfy the demands of the virtual machines.
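As a rough way to estimate "enough spindles", the sketch below uses the commonly cited rule-of-thumb write penalties (2 for RAID 1 and 10, 4 for RAID 5, 6 for RAID 6). These penalties, the workload figures, and the 175 IOPS per disk are all assumptions for illustration and are not taken from this post; substitute measured values for your own disks and workloads.

```python
import math

# Rule-of-thumb back-end I/Os generated per front-end write (assumed values).
WRITE_PENALTY = {"0": 1, "1": 2, "10": 2, "5": 4, "6": 6}


def backend_iops(frontend_iops, read_percent, level):
    """Back-end IOPS the disks must deliver for a given front-end workload."""
    reads = frontend_iops * read_percent / 100
    writes = frontend_iops - reads
    return reads + writes * WRITE_PENALTY[level]


def spindles_needed(frontend_iops, read_percent, level, iops_per_disk):
    """Number of disks needed to satisfy the workload, rounded up."""
    return math.ceil(backend_iops(frontend_iops, read_percent, level) / iops_per_disk)


# Hypothetical workload: 1000 IOPS at 70% read, on disks assumed to give 175 IOPS each.
for level in ("5", "6", "10"):
    print(f"RAID {level}: {spindles_needed(1000, 70, level, 175)} disks")
```

The sketch only covers performance. The result still has to meet the minimum disk count for the level, be an even number for RAID 10, and provide enough usable capacity.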

We have covered the Avago/LSI StorCLI utility in past posts, and how extremely useful it can be when loaded on ESXi servers that have DAS volumes presented by an Avago/LSI RAID controller. The advantage of using the StorCLI utility is that we don't have to reboot the host to start using it, as the VIB install doesn't require a reboot.

If you run a server with a PERC adapter, as most know, these are based on Avago/LSI adapters. However, the vanilla StorCLI utility may not work, especially with 14G servers with the newest PERC adapters installed. If you are not already aware, Dell maintains a customized version of the StorCLI utility called PERCCLI that is available for download for compatible systems. In working recently with a new 14G PowerEdge R740 server running a DAS setup, I was able to pull down the PERCCLI utility for ESXi 6.5 and install it on the host to manage RAID. In this post we will walk through how to install and manage Dell RAID in VMware ESXi 6.5 with the PERCCLI utility.

Install Dell PERCCLI Utility in VMware ESXi 6.5

The process to install the Dell PERCCLI utility in VMware ESXi 6.5 is the same as installing the Avago/LSI StorCLI. Below is a quick run-through of installing PERCCLI on a 14G Dell PowerEdge R740 running VMware ESXi 6.5 U1. To obtain the PERCCLI utility, simply plug in your service tag on the Dell Support site, and select either VMware 6.0 or VMware 6.5 for the operating system.

Note the format is a tar.gz package.

It is extremely easy to install and manage Dell RAID in VMware ESXi 6.5 with PERCCLI. The utility is a simple install with no reboot required, which is fantastic. Additionally, we can pull a wealth of information using the command line within our VMware ESXi 6.5 host, as shown in the example above. The Dell PERCCLI utility is also available for the newest Dell PowerEdge 14G servers. If using Dell hardware, this is your go-to utility for managing RAID within VMware ESXi.

Don’t rely on the latest StorCLI with Dell hardware, as in my testing it is not able to interact properly with Dell PERC RAID adapters, especially in the newest PowerEdge servers.